- Overview

- Deploying with Terraform

- Errors

- The ARN isn’t valid. A valid ARN begins with arn: and includes other information separated by colons or slashes., field: null, parameter: arn:aws:firehose:ap-northeast-1:~

- InvalidArgumentException: The supplied prefix(es) do not satisfy the following constraint: ErrorOutputPrefix must contain at least one occurrence of !{firehose:error-output-type}

- References

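Overview

Goal

I want to use Terraform to create a Kinesis Data Firehose delivery stream that exports the logs produced by AWS WAF v2 to S3 in Parquet format.

This continues from the previous post.

I have previously built a Kinesis Firehose stream with Terraform, although without Parquet output.

I have also built one with CloudFormation.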

Deploying with Terraform

Note that the values below are hard-coded.

- Account ID: 111122223333

- Bucket name: tf-waf-test-bucket

- Glue database name: aws_waf_terraform_db

- Glue table name: aws_waf_terraform_datacatalog

- Kinesis Firehose name: aws-waf-logs-terraform-kinesis-firehose
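
The hard-coded values can also be collected in one place. The following is only a sketch: the local names (`region`, `bucket_name`, `firehose_name`, `bucket_arn`, `account_id`) are hypothetical and do not appear in the configuration in this post. The `aws_caller_identity` data source looks up the account ID from the credentials Terraform runs with.

```hcl
# Look up the account ID of the credentials Terraform runs with,
# instead of writing 111122223333 into every ARN.
data "aws_caller_identity" "current" {}

locals {
  # Hypothetical local names for illustration; the values are the ones used in this post.
  region        = "ap-northeast-1"
  bucket_name   = "tf-waf-test-bucket"
  firehose_name = "aws-waf-logs-terraform-kinesis-firehose"

  # Values derived from the pieces above.
  bucket_arn = "arn:aws:s3:::${local.bucket_name}"
  account_id = data.aws_caller_identity.current.account_id
}
```

Resources can then refer to `local.bucket_name` or `local.account_id` instead of repeating the literal strings.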

S3

Create the bucket that the AWS WAF logs are exported to.

resource "aws_s3_bucket" "bucket" {

bucket = "tf-waf-test-bucket"

acl = "private"

}

Confirm that the S3 bucket has been created.
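
The `acl` argument on `aws_s3_bucket` is deprecated in version 4 and later of the AWS provider. If you are on such a version, one way to keep the bucket private is a public access block. This is a sketch under that assumption, not part of the original setup. The resource label `bucket` is chosen here for illustration.

```hcl
# Block every form of public access to the log bucket.
resource "aws_s3_bucket_public_access_block" "bucket" {
  bucket = aws_s3_bucket.bucket.id

  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}
```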

Glue

Create the Glue database and table (Data Catalog) that describe the Parquet data.

resource "aws_glue_catalog_database" "aws_glue_catalog_database" {

name = "aws_waf_terraform_db"

}

resource "aws_glue_catalog_table" "aws_glue_catalog_table" {

name = "aws_waf_terraform_datacatalog"

database_name = aws_glue_catalog_database.aws_glue_catalog_database.name

table_type = "EXTERNAL_TABLE"

parameters = {

EXTERNAL = "TRUE"

#"parquet.compression" = "SNAPPY"

classification = "parquet"

}

storage_descriptor {

location = "s3://tf-waf-test-bucket/WAFLOG/" # S3 location of the log data

input_format = "org.apache.hadoop.hive.ql.io.parquet.MapredParquetInputFormat"

output_format = "org.apache.hadoop.hive.ql.io.parquet.MapredParquetOutputFormat"

ser_de_info {

name = "my-stream"

serialization_library = "org.apache.hadoop.hive.ql.io.parquet.serde.ParquetHiveSerDe"

parameters = {

"serialization.format" = 1

}

}

columns {

name = "timestamp"

type = "bigint"

}

columns {

name = "formatversion"

type = "int"

}

columns {

name = "webaclid"

type = "string"

}

columns {

name = "terminatingruleid"

type = "string"

}

columns {

name = "terminatingruletype"

type = "string"

}

columns {

name = "action"

type = "string"

}

columns {

name = "terminatingrulematchdetails"

type = "array<struct<conditiontype:string,location:string,matcheddata:array<string>>>"

}

columns {

name = "httpsourcename"

type = "string"

}

columns {

name = "httpsourceid"

type = "string"

}

columns {

name = "rulegrouplist"

type = "array<struct<rulegroupid:string,terminatingrule:struct<ruleid:string,action:string>,nonterminatingmatchingrules:array<struct<action:string,ruleid:string>>,excludedrules:array<struct<exclusiontype:string,ruleid:string>>>>"

}

columns {

name = "ratebasedrulelist"

type = "array<struct<ratebasedruleid:string,limitkey:string,maxrateallowed:int>>"

}

columns {

name = "nonterminatingmatchingrules"

type = "array<struct<ruleid:string,action:string>>"

}

columns {

name = "httprequest"

type = "struct<clientip:string,country:string,headers:array<struct<name:string,value:string>>,uri:string,args:string,httpversion:string,httpmethod:string,requestid:string>"

}

}

partition_keys {

name = "year"

type = "string"

}

partition_keys {

name = "month"

type = "string"

}

partition_keys {

name = "day"

type = "string"

}

partition_keys {

name = "hour"

type = "string"

}

}

terraform apply

Confirm in the Glue console that the database and table have been created.

IAM Role for Kinesis Firehose

Kinesis Firehose needs the following three permissions.

resource "aws_iam_role" "firehose_role" {

name = "firehose_test_role"

assume_role_policy = <<EOF

{

"Version": "2012-10-17",

"Statement": [

{

"Action": "sts:AssumeRole",

"Principal": {

"Service": "firehose.amazonaws.com"

},

"Effect": "Allow",

"Sid": ""

}

]

}

EOF

}

resource "aws_iam_policy" "firehose_policy" {

name = "terraform_firehose_policy"

path = "/"

policy = <<EOF

{

"Version": "2012-10-17",

"Statement": [

{

"Effect": "Allow",

"Action": [

"glue:GetTable",

"glue:GetTableVersion",

"glue:GetTableVersions"

],

"Resource": "*"

},

{

"Effect": "Allow",

"Action": [

"s3:AbortMultipartUpload",

"s3:GetBucketLocation",

"s3:GetObject",

"s3:ListBucket",

"s3:ListBucketMultipartUploads",

"s3:PutObject"

],

"Resource": [

"arn:aws:s3:::tf-waf-test-bucket",

"arn:aws:s3:::tf-waf-test-bucket/*"

]

},

{

"Effect": "Allow",

"Action": [

"kinesis:DescribeStream",

"kinesis:GetShardIterator",

"kinesis:GetRecords",

"kinesis:ListShards"

],

"Resource": "arn:aws:kinesis:ap-northeast-1:111122223333:stream/aws-waf-logs-terraform-kinesis-firehose"

},

{

"Effect": "Allow",

"Action": [

"kms:Decrypt",

"kms:GenerateDataKey"

],

"Resource": [

"arn:aws:kms:ap-northeast-1:111122223333:key/%SSE_KEY_ID%"

],

"Condition": {

"StringEquals": {

"kms:ViaService": "s3.ap-northeast-1.amazonaws.com"

},

"StringLike": {

"kms:EncryptionContext:aws:s3:arn": "arn:aws:s3:::tf-waf-test-bucket/prefix*"

}

}

},

{

"Effect": "Allow",

"Action": [

"logs:PutLogEvents"

],

"Resource": [

"arn:aws:logs:ap-northeast-1:111122223333:log-group:log-group-name:log-stream:log-stream-name"

]

},

{

"Effect": "Allow",

"Action": [

"lambda:InvokeFunction",

"lambda:GetFunctionConfiguration"

],

"Resource": [

"arn:aws:lambda:ap-northeast-1:111122223333:function:%FIREHOSE_DEFAULT_FUNCTION%:%FIREHOSE_DEFAULT_VERSION%"

]

}

]

}

EOF

}

resource "aws_iam_role_policy_attachment" "firehose_iam" {

role = aws_iam_role.firehose_role.name

policy_arn = aws_iam_policy.firehose_policy.arn

}

terraform apply

Confirm that the IAM role has been created.
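
As an alternative to hand-written JSON in a heredoc, the trust policy can be generated with the `aws_iam_policy_document` data source. This is a sketch of that approach, not the configuration used in this post. The names `firehose_assume_role` and `firehose_role_from_document` are hypothetical.

```hcl
# Trust policy that lets Kinesis Data Firehose assume the role.
data "aws_iam_policy_document" "firehose_assume_role" {
  statement {
    effect  = "Allow"
    actions = ["sts:AssumeRole"]

    principals {
      type        = "Service"
      identifiers = ["firehose.amazonaws.com"]
    }
  }
}

# Hypothetical variant of the role defined earlier; use one or the other, not both.
resource "aws_iam_role" "firehose_role_from_document" {
  name               = "firehose_test_role_from_document"
  assume_role_policy = data.aws_iam_policy_document.firehose_assume_role.json
}
```

Because Terraform renders the JSON itself, quoting and comma mistakes in the policy body cannot occur.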

Kinesis

Resource: aws_kinesis_firehose_delivery_stream

resource "aws_kinesis_firehose_delivery_stream" "extended_s3_stream" {

name = "aws-waf-logs-terraform-kinesis-firehose"

destination = "extended_s3"

extended_s3_configuration {

role_arn = aws_iam_role.firehose_role.arn

bucket_arn = aws_s3_bucket.bucket.arn

prefix = "WAFLOG/year=!{timestamp:yyyy}/month=!{timestamp:MM}/day=!{timestamp:dd}/hour=!{timestamp:HH}/"

error_output_prefix = "ERRORLOG/!{firehose:error-output-type}/year=!{timestamp:yyyy}/month=!{timestamp:MM}/day=!{timestamp:dd}/hour=!{timestamp:HH}/"

buffer_size = 128

data_format_conversion_configuration {

input_format_configuration {

deserializer {

open_x_json_ser_de {}

}

}

output_format_configuration {

serializer {

parquet_ser_de {}

}

}

schema_configuration {

database_name = aws_glue_catalog_table.aws_glue_catalog_table.database_name

role_arn = aws_iam_role.firehose_role.arn

table_name = aws_glue_catalog_table.aws_glue_catalog_table.name

region = "ap-northeast-1"

}

}

}

}

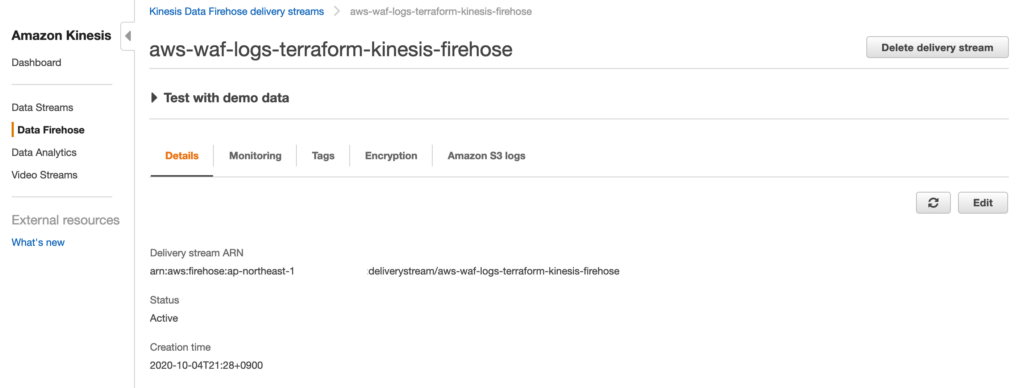

Confirm that the Kinesis Firehose delivery stream has been created.

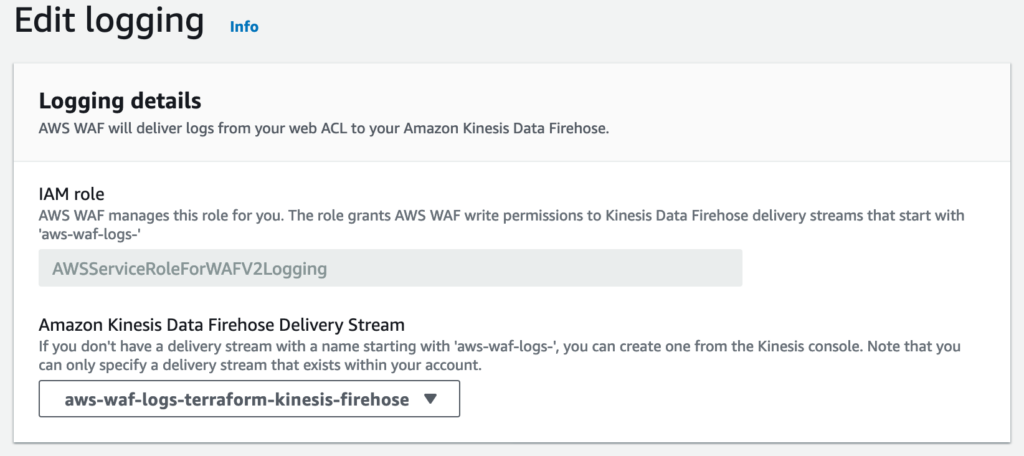
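
The IAM policy in this post allows `logs:PutLogEvents`, but only on a placeholder log group. If you want Firehose to report delivery and format conversion errors to CloudWatch Logs, a setup along these lines may help. It is a sketch only: the log group name, the stream name `S3Delivery`, and the resource labels are assumptions, not part of the original configuration.

```hcl
# Hypothetical log group and stream for Firehose error logging.
resource "aws_cloudwatch_log_group" "firehose" {
  name              = "/aws/kinesisfirehose/aws-waf-logs-terraform-kinesis-firehose"
  retention_in_days = 14
}

resource "aws_cloudwatch_log_stream" "firehose_s3" {
  name           = "S3Delivery"
  log_group_name = aws_cloudwatch_log_group.firehose.name
}

# To enable logging, add this block inside extended_s3_configuration
# of the delivery stream:
#
#   cloudwatch_logging_options {
#     enabled         = true
#     log_group_name  = aws_cloudwatch_log_group.firehose.name
#     log_stream_name = aws_cloudwatch_log_stream.firehose_s3.name
#   }
```

For this to work, the `logs:PutLogEvents` statement in the Firehose policy would also have to point at this log group rather than the placeholder ARN.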

AWS WAF Logging

Configure logging on AWS WAF using the Kinesis Firehose delivery stream created above.

The WAF is the one created in the previous post.

resource "aws_wafv2_web_acl_logging_configuration" "example" {

log_destination_configs = [aws_kinesis_firehose_delivery_stream.extended_s3_stream.arn]

resource_arn = aws_wafv2_web_acl.example.arn

}

Confirm that logging is configured.

With CloudFormation, the WAF logging configuration had to be done manually. Terraform handles it well.

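Querying with Athena

The logs are written to S3 under the partitioned WAFLOG/ prefix configured on the delivery stream.

To query them with Athena, I had to create a table with a Glue Crawler or a similar tool.

(I could not retrieve any values through the Glue table created above.)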
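
Since the crawler route is what worked, the crawler itself can also be managed from Terraform. The following is a minimal sketch. The crawler role `glue_crawler_role`, the crawler name `aws-waf-terraform-crawler`, and the other resource labels are hypothetical and were not part of the original setup. It crawls the `WAFLOG/` prefix and writes the resulting table into the Glue database defined earlier.

```hcl
# Hypothetical role that the Glue crawler assumes.
resource "aws_iam_role" "glue_crawler_role" {
  name = "glue_crawler_test_role"

  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect    = "Allow"
      Action    = "sts:AssumeRole"
      Principal = { Service = "glue.amazonaws.com" }
    }]
  })
}

# AWS managed policy with the Glue permissions a crawler needs.
resource "aws_iam_role_policy_attachment" "glue_service" {
  role       = aws_iam_role.glue_crawler_role.name
  policy_arn = "arn:aws:iam::aws:policy/service-role/AWSGlueServiceRole"
}

# Read access to the log bucket for the crawler.
resource "aws_iam_role_policy" "glue_crawler_s3" {
  name = "glue_crawler_s3_read"
  role = aws_iam_role.glue_crawler_role.id

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect = "Allow"
      Action = ["s3:GetObject", "s3:ListBucket"]
      Resource = [
        aws_s3_bucket.bucket.arn,
        "${aws_s3_bucket.bucket.arn}/*",
      ]
    }]
  })
}

# Crawl the Parquet files under WAFLOG/ and register a table for them.
resource "aws_glue_crawler" "waf_logs" {
  name          = "aws-waf-terraform-crawler"
  database_name = aws_glue_catalog_database.aws_glue_catalog_database.name
  role          = aws_iam_role.glue_crawler_role.arn

  s3_target {
    path = "s3://${aws_s3_bucket.bucket.bucket}/WAFLOG/"
  }
}
```

No schedule is set in this sketch, so the crawler has to be started by hand, for example from the Glue console, after the first log files arrive.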

Errors

The ARN isn’t valid. A valid ARN begins with arn: and includes other information separated by colons or slashes., field: null, parameter: arn:aws:firehose:ap-northeast-1:~

This happened because the Kinesis Firehose name did not start with the prefix "aws-waf-logs-".
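
If the stream name is passed in as a variable, the prefix rule can be checked at plan time, before AWS rejects the ARN. This is a sketch assuming Terraform 0.13 or later, which supports `validation` blocks. The variable `firehose_name` is hypothetical and is not used elsewhere in this post.

```hcl
# Hypothetical input variable for the delivery stream name.
variable "firehose_name" {
  type    = string
  default = "aws-waf-logs-terraform-kinesis-firehose"

  validation {
    # AWS WAF only accepts Firehose destinations whose name starts with this prefix.
    condition     = can(regex("^aws-waf-logs-", var.firehose_name))
    error_message = "The Firehose name must start with \"aws-waf-logs-\"."
  }
}
```

The delivery stream would then use `name = var.firehose_name`, and a name without the prefix fails during `terraform plan`.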

InvalidArgumentException: The supplied prefix(es) do not satisfy the following constraint: ErrorOutputPrefix must contain at least one occurrence of !{firehose:error-output-type}

At first I had written it like this.

error_output_prefix = "ERRORLOG//year=!{timestamp:yyyy}/month=!{timestamp:MM}/day=!{timestamp:dd}/hour=!{timestamp:HH}/"

Adding the error output type to the prefix fixed it.

error_output_prefix = "ERRORLOG/!{firehose:error-output-type}/year=!{timestamp:yyyy}/month=!{timestamp:MM}/day=!{timestamp:dd}/hour=!{timestamp:HH}/"
